I’m starting a couple of posts with detailed explanations and solutions for my Authenticode Challenge. Let’s start with a solution using standard tools.

If you’re a bit into cryptography, you know that the textbook attack on RSA public-key cryptography is integer factorization. Long keys are used to thwart this attack, because no efficient method has been found to factor large integers within an acceptable time and cost. While researching Authenticode, I asked myself this question: assume you’ve solved the factorization problem, how exactly would you forge a new digital signature for a patched executable?

I worked out a method, and then got the idea to turn this into a difficult puzzle for you, i.e. a real challenge. But to do that, I had to find a way to make the integer factorization a non-issue for the puzzle. My first solution, using a very small key, was a dead-end. First the key had to be large enough to allow me to generate a certificate (about 360 bits long), but then the signcode procedure didn’t work. I figured out that the key had to be at least 512 bits for Authenticode to work. But a 512 bits key would take too long to factorize… Read on to find out how I solved this.

Solution 1

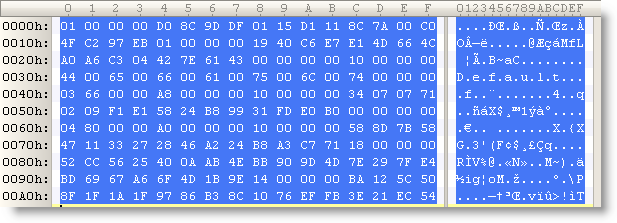

This solution takes mostly place on a Linux box. The first thing we have to do is recover the private key…

1) Get the authenticode challenge file ac.exe

2) Extract the PKCS7 Authenticode signature with my digital signature tool:

disitool.py extract ac.exe ac.exe.pkcs7

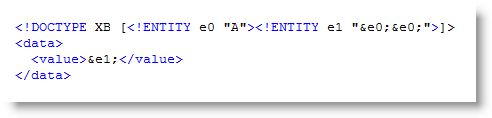

3) Dump the information in the pkcs7 file with openssl:

openssl pkcs7 -in ac.exe.pkcs7 -inform DER -text -print_certs > ac.exe.pkcs7.text

The public key is composed of the Modulus and the Exponent.

4) Lets extract the modulus from the certificate with this command:

openssl x509 -modulus -in ac.exe.pkcs7.text

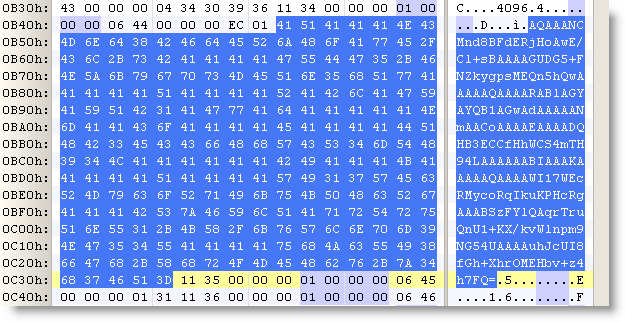

Modulus=D0EA1ABA978DF0065B2009F75C846F28B04ED5143B237B3FC24272245ADE837EFE0271E1A2854E0C81BA9F70A83AD86D47B0EACD062BC15BC61A99DC83124EC9

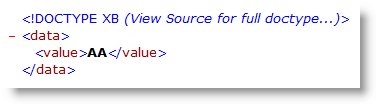

The modulus N is an integer that is the product of 2 prime numbers, P and Q (P and Q are kept secret). Integer factorization will allow you to recover P and Q, and hence produce the private key. There are several algorithms and tools to factorize integers, I’ll just point you to a didactic cryptography tool I mentioned before: Cryptool. But because I’m using a 512 bit modulus, factorization will take a long time, and I wanted to avoid this. So lets do something else.

5) Convert the modulus from a hexadecimal representation to a decimal representation, for example with Python:

python -c 'print 0xD0EA1ABA978DF0065B2009F75C846F28B04ED5143B237B3FC24272245ADE837EFE0271E1A2854E0C81BA9F70A83AD86D47B0EACD062BC15BC61A99DC83124EC9'

The modulus N in decimal representation is:

10941738641570527421809707322040357612003732945449205990913842131476349984288934784717997257891267332497625752899781833797076537244027146743531593354333897

6) Search for this number with Live Search (Google will not accept such a large search term):

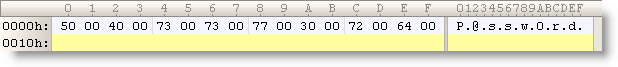

To spare you the long factorization time, I used a 512 bit key that has already been factorized: RSA-155 (this is the first 512 bit key to be factorized and was a landmark result in integer factorization).

Thus we have:

P = 102639592829741105772054196573991675900716567808038066803341933521790711307779

Q = 106603488380168454820927220360012878679207958575989291522270608237193062808643

Next post will explain in detail how to use P and Q to generate a new Authenticode signature…